Artificial Intelligence

Category: Generative AI

Evaluating generative AI models with Amazon Nova LLM-as-a-Judge on Amazon SageMaker AI

Evaluating the performance of large language models (LLMs) goes beyond statistical metrics like perplexity or bilingual evaluation understudy (BLEU) scores. For most real-world generative AI scenarios, it’s crucial to understand whether a model is producing better outputs than a baseline or an earlier iteration. This is especially important for applications such as summarization, content generation, […]

Implementing on-demand deployment with customized Amazon Nova models on Amazon Bedrock

In this post, we walk through the custom model on-demand deployment workflow for Amazon Bedrock and provide step-by-step implementation guides using both the AWS Management Console and APIs or AWS SDKs. We also discuss best practices and considerations for deploying customized Amazon Nova models on Amazon Bedrock.

Building enterprise-scale RAG applications with Amazon S3 Vectors and DeepSeek R1 on Amazon SageMaker AI

Organizations are adopting large language models (LLMs), such as DeepSeek R1, to transform business processes, enhance customer experiences, and drive innovation at unprecedented speed. However, standalone LLMs have key limitations such as hallucinations, outdated knowledge, and no access to proprietary data. Retrieval Augmented Generation (RAG) addresses these gaps by combining semantic search with generative AI, […]

Enabling customers to deliver production-ready AI agents at scale

Today, I’m excited to share how we’re bringing this vision to life with new capabilities that address the fundamental aspects of building and deploying agents at scale. These innovations will help you move beyond experiments to production-ready agent systems that can be trusted with your most critical business processes.

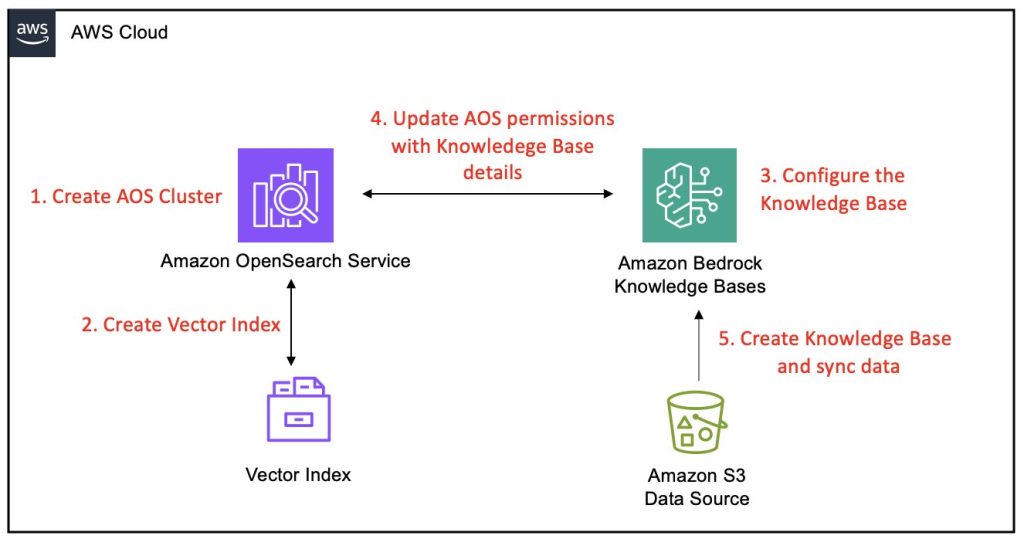

Amazon Bedrock Knowledge Bases now supports Amazon OpenSearch Service Managed Cluster as vector store

Amazon Bedrock Knowledge Bases has extended its vector store options by enabling support for Amazon OpenSearch Service managed clusters, further strengthening its capabilities as a fully managed Retrieval Augmented Generation (RAG) solution. This enhancement builds on the core functionality of Amazon Bedrock Knowledge Bases , which is designed to seamlessly connect foundation models (FMs) with internal data sources. This post provides a comprehensive, step-by-step guide on integrating an Amazon Bedrock knowledge base with an OpenSearch Service managed cluster as its vector store.

AWS doubles investment in AWS Generative AI Innovation Center, marking two years of customer success

In this post, AWS announces a $100 million additional investment in its AWS Generative AI Innovation Center, marking two years of successful customer collaborations across industries from financial services to healthcare. The investment comes as AI evolves toward more autonomous, agentic systems, with the center already helping thousands of customers drive millions in productivity gains and transform customer experiences.

Build secure RAG applications with AWS serverless data lakes

In this post, we explore how to build a secure RAG application using serverless data lake architecture, an important data strategy to support generative AI development. We use Amazon Web Services (AWS) services including Amazon S3, Amazon DynamoDB, AWS Lambda, and Amazon Bedrock Knowledge Bases to create a comprehensive solution supporting unstructured data assets which can be extended to structured data. The post covers how to implement fine-grained access controls for your enterprise data and design metadata-driven retrieval systems that respect security boundaries. These approaches will help you maximize the value of your organization’s data while maintaining robust security and compliance.

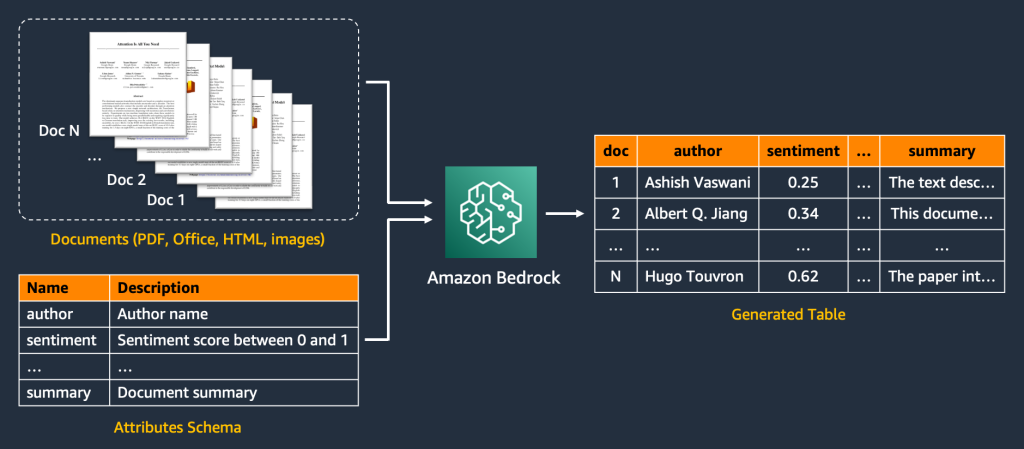

Intelligent document processing at scale with generative AI and Amazon Bedrock Data Automation

This post presents an end-to-end IDP application powered by Amazon Bedrock Data Automation and other AWS services. It provides a reusable AWS infrastructure as code (IaC) that deploys an IDP pipeline and provides an intuitive UI for transforming documents into structured tables at scale. The application only requires the user to provide the input documents (such as contracts or emails) and a list of attributes to be extracted. It then performs IDP with generative AI.

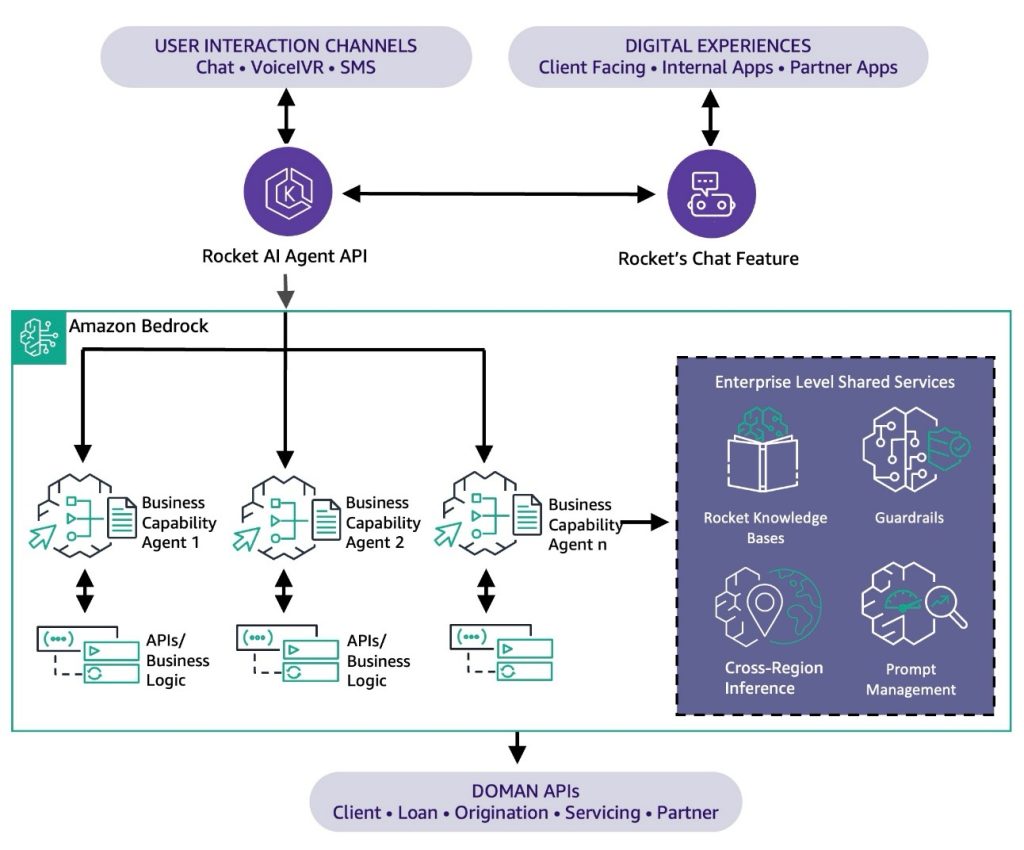

How Rocket streamlines the home buying experience with Amazon Bedrock Agents

Rocket AI Agent is more than a digital assistant. It’s a reimagined approach to client engagement, powered by agentic AI. By combining Amazon Bedrock Agents with Rocket’s proprietary data and backend systems, Rocket has created a smarter, more scalable, and more human experience available 24/7, without the wait. This post explores how Rocket brought that vision to life using Amazon Bedrock Agents, powering a new era of AI-driven support that is consistently available, deeply personalized, and built to take action.

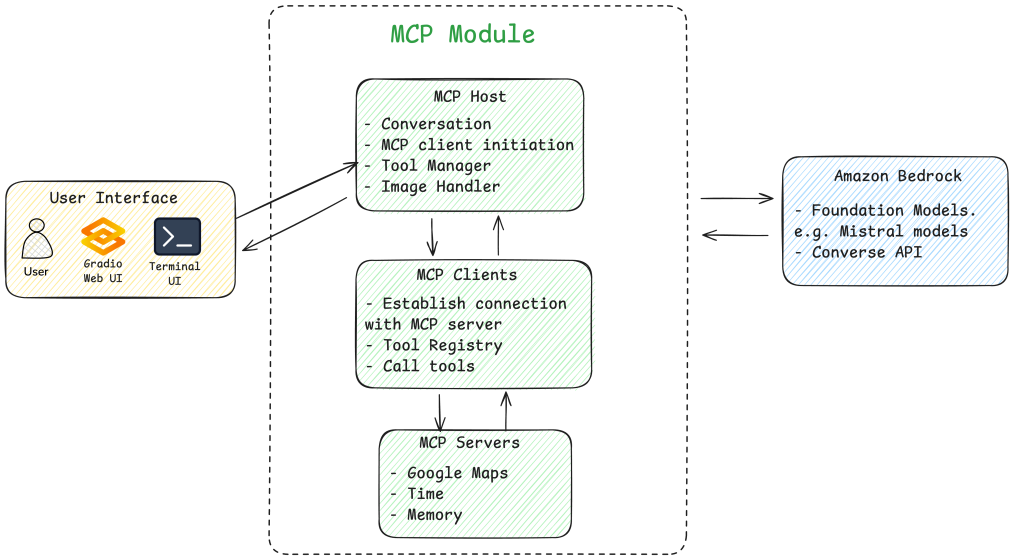

Build an MCP application with Mistral models on AWS

This post demonstrates building an intelligent AI assistant using Mistral AI models on AWS and MCP, integrating real-time location services, time data, and contextual memory to handle complex multimodal queries. This use case, restaurant recommendations, serves as an example, but this extensible framework can be adapted for enterprise use cases by modifying MCP server configurations to connect with your specific data sources and business systems.